For a long time, making original music sat behind a wall of skills, software, and time. You either needed to compose, arrange, record, and edit by yourself, or you needed to hire people who could. That gap is exactly why tools like AI Music Generator have become easier to take seriously. They do not remove taste, judgment, or creative direction, but they do reduce the distance between an idea in your head and a listenable result. In my view, that shift matters most for people who already know what they want a song to feel like, yet do not want to spend days turning that idea into a draft.

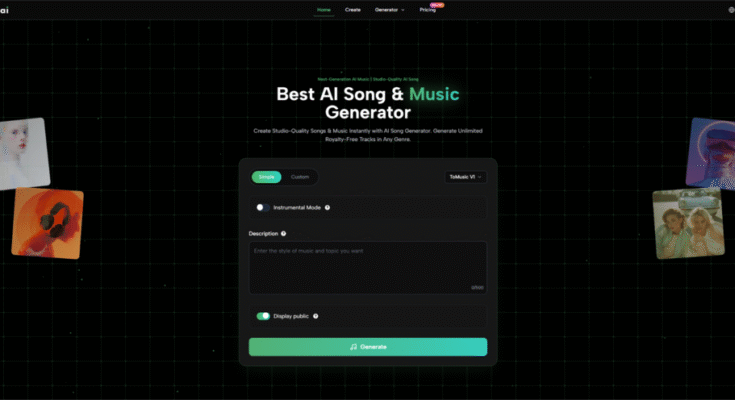

What makes this category interesting is not just speed. It is the way the workflow is simplified into a few decisions that are intuitive even for non-musicians: which mood you want, what kind of voice fits, whether you want lyrics or instrumental-only output, and how much control you want over the final structure. When a platform presents those decisions clearly, the technology becomes less mysterious and more usable.

Why Fewer Friction Points Change Music Workflows

Music creation often slows down before it even starts. Many people can describe a sound, but cannot translate that description into melody, rhythm, arrangement, and vocal direction. A practical AI music workflow reduces that translation burden. Instead of asking users to think like producers from the first second, it lets them begin with language.

That matters for several types of users:

- content creators who need background songs quickly

- marketers who want branded music drafts

- indie developers testing mood for games or apps

- hobbyists exploring song ideas without studio skills

The important point is not that every output is instantly final. The real advantage is that a rough musical concept can appear quickly enough to evaluate. Once you can hear an idea, you can revise it. Before that, the idea remains vague.

How Input Design Shapes The Final Result

The difference between a weak music tool and a useful one is often not hidden in the model name. It is visible in the inputs. Judging by the official interface and workflow, the platform is built around a structured creation process rather than a single empty prompt box. That design choice has practical consequences because it gives users more than one way to guide the result.

Two Creation Modes Support Different Intentions

One useful part of the workflow is the distinction between a simpler generation route and a more controlled one.

Simple Mode Fits Fast Idea Testing

When the goal is speed, a lightweight description-first workflow makes sense. You describe the kind of track you want, then let the system interpret that request into a complete musical draft. For quick concepting, this is often the least intimidating path.

Custom Mode Fits Higher Creative Control

When the goal is precision, a more detailed mode becomes more valuable. In that setting, users can provide their own lyrics and guide the track with additional parameters such as style, mood, voice direction, and tempo. In my testing of similar workflows, this kind of structure usually produces outputs that feel more intentional because the model has fewer gaps to fill on its own.

Input Categories Matter More Than Prompt Length

A long prompt is not always a better prompt. What matters more is whether the platform lets the user specify the right dimensions. The official workflow suggests several important control points:

| Input Element | What It Helps Define | Why It Matters |
| --- | --- | --- |
| Description | Overall song intent | Gives the system a creative target |
| Title | Framing and identity | Helps organize the output idea |
| Style | Genre direction | Narrows sonic expectations |
| Mood | Emotional tone | Shapes listener perception |
| Voice | Vocal character | Influences the human feel |
| Tempo | Energy and pacing | Affects movement and rhythm |
| Lyrics | Verbal content | Increases narrative control |
| Instrumental Option | Vocal absence | Useful for background scoring |

This is a stronger approach than relying on one sentence alone. A user who knows very little about production can still make meaningful decisions if those decisions are broken into understandable fields.
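To make the idea of separate input fields concrete, here is a minimal sketch in Python. It is not the platform's API: the `SongRequest` class, its field names, and the `validate` and `to_payload` helpers are hypothetical, chosen only to mirror the input elements listed in the table above. The point it illustrates is that separate fields can be checked for conflicts in a way a single free-text prompt cannot.

```python
from dataclasses import dataclass, asdict
from typing import List, Optional


@dataclass
class SongRequest:
    """Hypothetical container mirroring the input elements in the table."""
    description: str                 # overall song intent
    title: Optional[str] = None      # framing and identity
    style: Optional[str] = None      # genre direction
    mood: Optional[str] = None       # emotional tone
    voice: Optional[str] = None      # vocal character
    tempo: Optional[str] = None      # energy and pacing
    lyrics: Optional[str] = None     # verbal content
    instrumental: bool = False       # True means no vocals

    def validate(self) -> List[str]:
        """Return a list of conflicts between fields (empty if none)."""
        problems = []
        if not self.description.strip():
            problems.append("description is empty")
        if self.instrumental and self.lyrics:
            problems.append("lyrics were provided for an instrumental track")
        if self.instrumental and self.voice:
            problems.append("a voice was chosen for an instrumental track")
        return problems

    def to_payload(self) -> dict:
        """Drop unset fields so only explicit decisions are passed along."""
        return {k: v for k, v in asdict(self).items() if v is not None}


# Example: an instrumental background track with one clear creative lane.
request = SongRequest(
    description="Warm lo-fi track for a study video",
    style="lo-fi",
    mood="calm",
    instrumental=True,
)
print(request.validate())
print(request.to_payload())
```

A structure like this also shows why field-based input suits non-musicians: each field asks one understandable question, and anything left unset is simply omitted rather than guessed.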

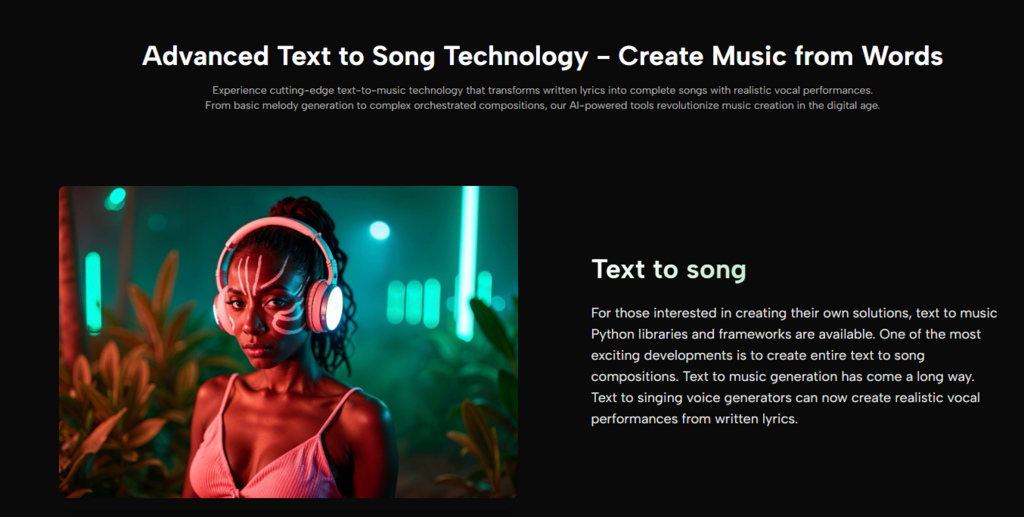

What The Generation Process Appears To Be Doing

From the official description, the platform interprets user input by identifying musical attributes such as genre, mood, tempo, instrumentation, and related stylistic cues, then uses that information to generate a full song or music draft. In practical terms, the system appears to be doing more than matching keywords: it is trying to map textual intent onto musical structure.

That is where expectations need to stay realistic. AI music systems can produce impressive drafts, but results still depend heavily on clarity of input. If your direction is vague, the output may sound generic. If your direction is too crowded, the result may feel confused. The best outcomes usually come from prompts and settings that describe one strong creative lane rather than five competing ones.

A Practical Three-Step Creation Path

The official workflow can be understood as a short sequence rather than a complex studio process. That is part of its appeal.

Step One Starts With Creative Direction

The first step is choosing how you want to create: a simpler text-driven route or a more detailed custom route. At this stage, the real task is deciding whether you want speed or control. Users can also define core attributes like style, mood, title, and whether the track should be instrumental.

Step Two Adds Lyrics Or Detailed Guidance

If you want more authorship over the song, this is the stage where writing matters most. You can provide your own lyrics and shape the musical direction through clearer settings. For many users, this is where a tool shifts from novelty to usefulness, because the result begins to reflect specific intent rather than just generic genre imitation. That is also where a workflow built around Lyrics to Song becomes more meaningful for people who already have words but not yet a finished musical form.

Step Three Generates And Stores The Output

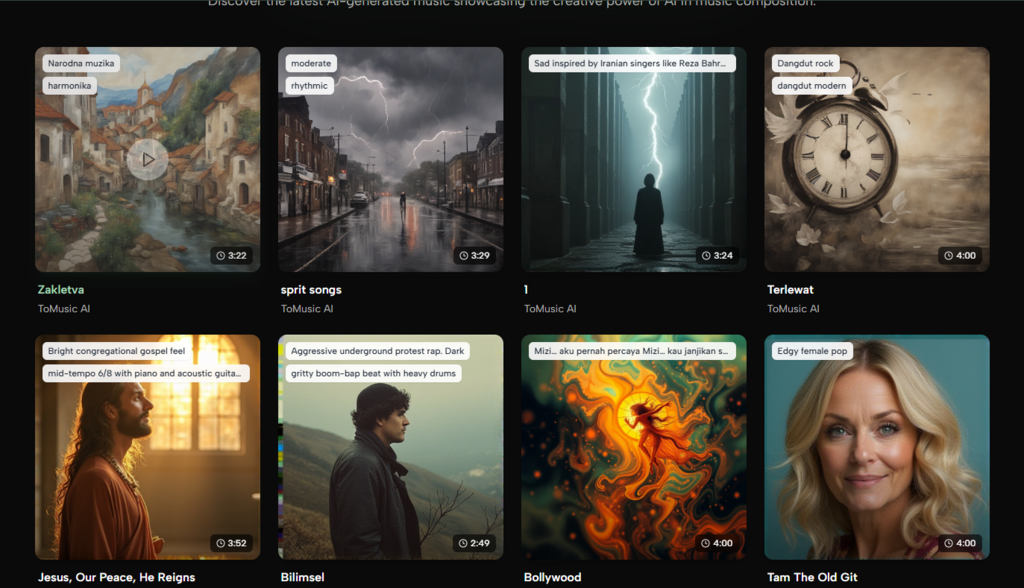

Once the inputs are set, the song is generated and then saved into the platform’s music library or studio area for later review. That storage layer matters more than it first appears. Music generation is rarely a one-try process. Being able to compare versions, revisit earlier drafts, and decide what is worth keeping is part of the real creative workflow.

Why Model Choice Can Affect User Experience

Another notable part of the platform is its multi-model structure. Instead of presenting one fixed engine, it offers different model generations with different strengths. The official positioning suggests that some versions focus more on speed and balanced output, while later versions emphasize greater realism, vocal expression, or richer composition.

From a user perspective, this matters for a simple reason: not every music task has the same goal.

Fast Drafting And Richer Production Are Different Needs

A creator making ten quick concept tracks for social content does not need the exact same thing as someone developing a full-length vocal piece. One may prefer speed and iteration. The other may care more about expressive vocals, layered arrangement, or longer duration. A multi-model setup gives room for those different priorities.

Earlier Models Can Favor Iteration And Speed

For rapid experimentation, faster and lighter models are often enough. They help users test directions without investing too many credits or too much time on each attempt.

Later Models Can Favor Detail And Realism

For more polished work, later models may be better suited when the goal is stronger vocal presence, more developed harmonies, or a fuller arrangement. In my view, that kind of choice architecture makes the tool feel more like a creative system and less like a one-button gimmick.

Where The Tool Feels Most Useful In Practice

The strongest use cases are not always the most glamorous ones. In everyday work, a platform like this seems especially practical in scenarios where original music is needed but traditional production is too slow or expensive.

Content Teams Need Fast Musical Direction

Short-form video, branded clips, explainers, and demos often need music that fits a mood without requiring a full production cycle. A fast drafting workflow can help teams move from silence to usable atmosphere quickly.

Writers And Creators Can Prototype Song Ideas

People who write lyrics, poetry, hooks, or emotional concepts often struggle at the melody stage. A system that turns written content into song drafts creates a bridge between text thinking and audio thinking.

Independent Builders Benefit From Faster Testing

Game makers, app designers, and product teams often need temporary music to test emotional tone. A generated draft can be enough to evaluate whether a scene feels tense, playful, calm, or cinematic before spending more on final assets.

What Still Limits The Experience Today

A realistic article should not pretend the process is perfect. AI music tools are powerful, but they still require good direction and patient iteration.

Prompt Quality Still Shapes Output Quality

The model can only respond to what it is given. Weak wording, conflicting emotional cues, or overly broad instructions often lead to weaker songs. The tool lowers the barrier to making music, but it does not eliminate the need for judgment.

Multiple Generations May Still Be Necessary

Even with better controls, the first result is not always the best result. In my experience, music generation works best when users expect to refine rather than instantly finish. That mindset leads to better outcomes and less disappointment.

Creative Identity Still Depends On The User

The platform can generate sound, structure, and performance-like output, but the strongest results still come from human decisions: which emotional lane to choose, which lyrics are worth using, which styles to avoid, and when a draft is good enough to keep.

Why This Matters Beyond The Tool Itself

The bigger story is not only about one platform. It is about how music creation is being restructured into accessible creative decisions. When software turns intimidating production steps into readable choices, more people can participate in making songs. That does not replace musicianship. It changes who gets to begin.

For that reason, I see tools like this as most valuable not when they promise magic, but when they help people move from intention to audible draft with less friction. That is a modest promise, but a meaningful one. And in creative technology, modest promises that actually work tend to matter more than louder claims.